You Can’t Protect Women With Laws Alone

The abuse of Collien Fernandes means Germany is rethinking its laws. The problem may be bigger than that.

On March 19, Der Spiegel ran a deeply reported feature based on hours-long interviews with German television host Collien Fernandes. By the time Fernandes stepped onstage at a rally in Hamburg on March 26 – exactly one week later – she was wearing a bulletproof vest.

The article described a woman who had endured years of online sexual abuse carried out through fake profiles, digital impersonation, and synthetic pornographic images. Now here she was, dressed for physical danger. If there had ever been any assumption that such violence is confined to a screen, it was gone.

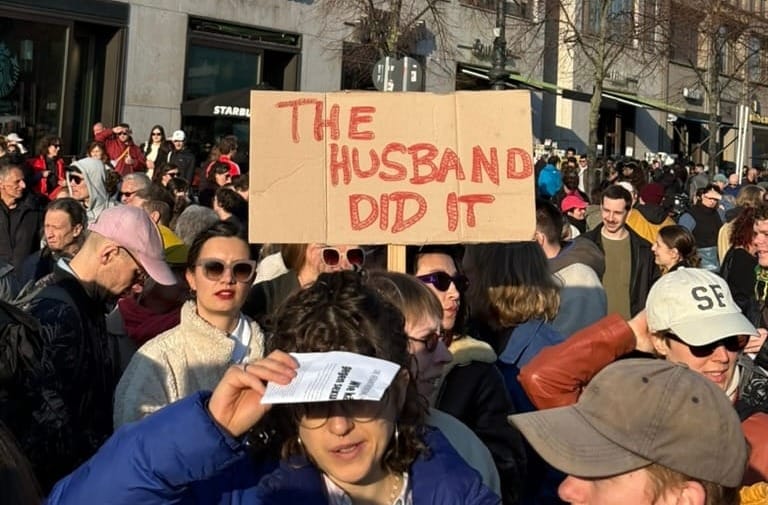

Fernandes was under police protection after receiving a wave of death threats. She had come to downtown Hamburg to speak in front of 22,000 people at a protest against digital sexual violence. Four days before that, thousands had turned out in Berlin to support her, filling the space in front of Brandenburg Gate. The cause of this outrage was that Fernandes has accused her ex-husband, actor Christian Ulmen, of creating fake profiles in her name on platforms like LinkedIn, impersonating her, arranging phone sex meetings with men, and sending pornographic deepfake images and videos featuring women who looked deceptively similar to her. “My body was stolen from me for years,” Fernandes told Der Spiegel, in a report headlined “You virtually raped me.” (In the interview, Fernandes recounts Ulmen confessing his misdeeds to her on Christmas in 2024; Ulmen’s lawyer has since denied all claims.)

Fernandes’ story has exposed a flaw in Germany’s legal system. She chose to file a criminal complaint against Ulmen in Mallorca, where the couple had lived for about three years before divorcing in 2026, because Spain has stricter laws against gender-based violence. Fernandes had already gone to Berlin police in November 2024 over fake accounts in her name, filing a complaint against persons unknown – meaning she reported the alleged crime before she said she knew who was behind it. That case was later transferred to Schleswig-Holstein on jurisdictional grounds. Prosecutors closed the investigation in June 2025, but reopened it following the Der Spiegel reporting.

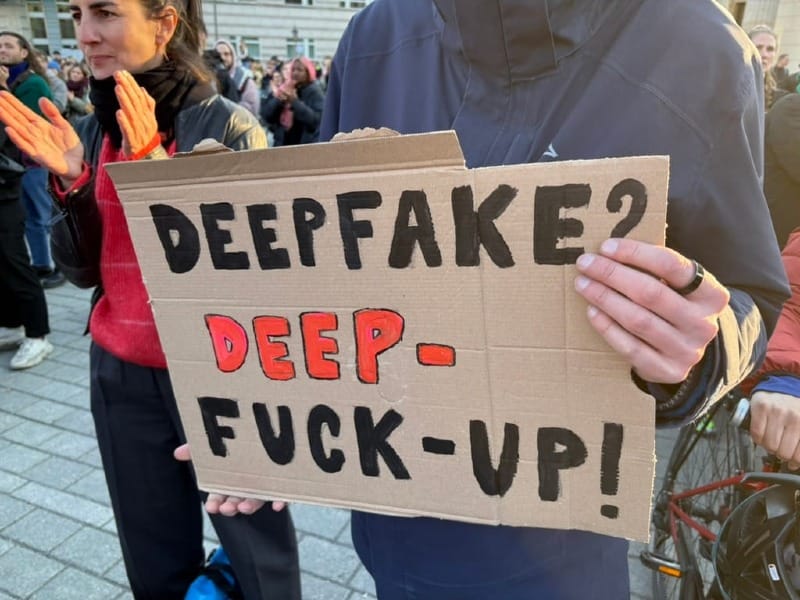

The details of the case – and the idea that the person tormenting you anonymously online could be sleeping in your bed – created a near-immediate wave of outrage across Germany. The anger is both moral and legal. Much of what Fernandes describes isn’t covered by the German criminal code. Rape and sexual assault provisions require physical contact. Rules on intimate recordings and the violations of rights through such recordings were written for media made by humans, and legal experts are debating whether they extend to deepfakes. The laws against stalking – which cover harassment and impersonation – come closer. The right to one’s own image under the Art Copyright Act is also available, though it carries lower penalties and isn’t designed to address sexualised humiliation. Even Germany’s new nationwide framework for combating domestic violence, passed in February 2025, doesn’t explicitly mention digital violence. So while Germany can prosecute fragments of abuse like Fernandes’, it still lacks a clear, enacted offense tailored to non-consensual deepfakes.

Politicians have been scrambling to promise legislative reform. Interior Senator Iris Spranger (SPD) called for a more effective law to protect victims of image-based digital sexual violence that would close the legal loophole. Federal Justice Minister Stefanie Hubig has promised a broader bill coming this spring, which would criminalise the production and distribution of AI-generated pornographic deepfakes, cover secretly taken photos and videos, and include civil measures that make it easier for victims to act against platforms, including rights to information on perpetrators and account suspensions.

Two other proposals are already moving through the Bundestag: an older, narrower deepfake bill introduced before the Fernandes case became public, and a Greens bill on image-based sexualised violence that has already had its first reading and gone to committee. Greens lawmaker Lena Gumnior recently told DW: “The fact that [Fernandes’] lawyer advised her to file a criminal complaint in Spain rather than in Germany is so telling of the situation here on the ground and must serve as a wake-up call to the federal government.”

But the Fernandes case does not matter only because it exposes a legal gap. It also reveals how quickly Germany reaches for the most legible fix – criminalise the content – while leaving the deeper machinery of misogynistic abuse, data extraction, and institutional failure largely untouched. Even Hubig, while opening the debate in the Bundestag, admitted, “The technology is new, but the motive is ancient.”

Today, making a realistic deepfake is relatively easy. Users can upload source material, and then software can imitate a target’s likeness. What once required technical expertise is now available through browser-based tools for face swapping and voice cloning. That, combined with how much the technology has improved in recent years, has helped make an age-old form of violence feel frighteningly new.

For Ana Ornelas, Berlin-based advocacy officer at the Digital Intimacy Coalition, that newness is exactly what risks narrowing the debate in the wrong direction. “I’m pretty concerned with where I’m seeing things going,” she told HEIST. “I fear that concentrating on the content is taking away from how we can actually protect victims.”

The danger, she argues, is that lawmakers are mistaking the tool for the problem. “Criminalising sexualised content is not going to be enough. First of all, because what is sexualised content? … It's a really blurred line. Is it when genitals are showing? Is it when there is eroticism? This is the same taxonomy problem that we have around social media,” Ornelas said. “We’re just going to repeat the problem when it comes to AI-generated content and, most importantly, it’s not going to protect the victims.”

That is the trap now opening up in Germany: once the debate becomes a fight over what counts as sexualised or pornographic, the underlying dynamics of exploitation and coercion get harder to see. “Basically, AI-generated content is a remix of existing content, and this existing content that’s being poached to create deepfake porn comes from real performers and real bodies of real people,” Ornelas explained. “We should be focusing more on the privacy and the legislation around how models are using data, rather than paying attention to what kind of content is allowed.”

What Ornelas is pointing to is a deeper issue of extraction. The deepfake is the output, but the abuse begins earlier. What Christian Ulmen allegedly did merely automated and accelerated something much older: the extraction of women’s identity and images, and their reuse for sexual humiliation, control, and reputational damage.

Fernandes has since framed the abuse in exactly those terms. On Instagram, she wrote that Ulmen has a degradation fetish. “It turns him on to humiliate me and present me in a way in my professional environment that he knew I would find horrible,” she wrote. “Over the past 10 years or so, he has created several fake profiles under my name on social media. He contacted male users, strangers and men from my professional circle … It was important to him that everything seemed credible and that the erotic material appeared private, as if I had secretly filmed myself having sex.” She claims he carried on “an intense online affair” in her name with at least 30 men. In that sense, the abuse was never about manipulating content; it was about dominating her using her own image.

Ornelas puts it more bluntly: “The issue here is not AI-generated, non-consensual intimate image creation or sharing. The issue is targeted attacks and misogyny and gender-based violence. The deepfakes are just a symptom … We’re just putting a band-aid on an injury that’s been festering, and that’s not going to be enough.”

Collien Fernandes’ case is unusual, for much the same reason that her husband was able to interest dozens of unnamed men on the internet in her image: she’s recognisable. The vast majority of women who experience online sexual abuse don’t have the visibility to prompt a national response. Most women targeted by deepfakes or abused by their husbands won’t have cameras, crowds, or public pressure behind them. German institutions already struggle to investigate digital abuse; it’s hard to see how new laws alone will solve that.

Part of the problem with this form of digital sexual violence is that it’s not a finite act. A file can be downloaded, saved, altered, and recirculated endlessly. Courts can punish an offender, but they can never fully stop this cycle. Authorities also move slowly. Investigations take months, sometimes years, whereas it takes a matter of minutes to create deepfake videos and message them to anonymous people online, and a matter of hours for them to spread. Tracking the abuse is time-intensive, and tying it to one person can be nearly impossible.

Even when legislation does exist to compel investigations, people have to navigate the institutions meant to enforce it. “Criminal law is useless if victims don’t know where to get help, if the police don’t listen to them, if no investigations are conducted, and if counseling centers lack capacity,” Die Linke’s Anne-Mieke Bremer told Berliner Zeitung. She warned against constantly adding new “puzzle pieces” to the criminal code while ignoring how digitalisation is changing the experience of violence itself.

This is where political opportunism begins. Reactionary politics have a habit of smuggling other agendas in under the banner of protection. “I’ve been seeing some scary movements trying to hijack this conversation … to just create more surveillance and repression,” said Ornelas.

The signs are already visible. CSU legal policy spokesperson Susanne Hierl has called for expanded police investigative powers, including the storage of IP addresses. Chancellor Friedrich Merz recently used a question about digital violence to draw a connection between violent crime and immigration, even though the Fernandes case involves two German nationals. The case also resurfaced debate over a real-name policy online – the idea that users should have to appear under their legal identities rather than pseudonymously – which critics say would limit free speech, encourage unregulated discrimination, and require people to provide personal data that could be weaponised to harm marginalised people or immigrants.

For Berlin-based pornography producer and Free Speech Coalition Europe (FSCE) co-founder Paulita Pappel, the danger isn’t only legal overreach but the way debates like this can slip from protecting victims into regulating sex itself. “Politicians are finally addressing this issue, yet there’s the fear of them co-opting feminist demands into prohibitionist laws that would further limit our sexual freedom instead of protecting it,” she told HEIST. Attempts to define and then tighten restrictions on sexualised content can very quickly allow bad-faith lawmakers to put policies in place that unevenly criminalise the female body (similar to Instagram’s no-nipple rule) and crack down on sexual education.

Often, such governmental restrictions hit sex workers first, Pappel explained. And when cases like Fernandes’ break into public consciousness, little discourse is saved for the people whose images are repurposed without their consent or compensation. “A deepfake often has two victims, and we must stop making one of them invisible,” Pappel said.

That erasure is exactly what makes the current legislative push so fraught. On March 25, European sex work advocacy group Berufsverband erotische und sexuelle Dienstleistungen e.V. (BesD) and FSCE released a joint statement calling for comprehensive reform of sexual criminal law with the active participation of sex workers. “We welcome the fact that politicians are taking action. But we caution against purely additive legislation – more paragraphs, more prohibitions – which does not address the root problems but deepens them,” they wrote.

Still, Pappel isn’t arguing against legislation itself. “There’s a culturally deep-rooted problem underlying these cases of sexualised digital violence. Misogyny, a patriarchal system, an old sexual moral and old laws are a part of it,” she said. “Legislation can not change a culture immediately, but it is a powerful tool for supporting progress.”

New laws can name the harm. They can create routes for redress and signal that something once trivialised will be taken seriously. What they cannot do is dismantle the culture that perpetuates this abuse. That work is slower, messier, and less satisfying than passing a bill and calling it a day – and Berlin should know this already. According to the city’s police, there were more than 19,200 cases of domestic and partner violence in 2024 – the highest in a decade. Seventy-one percent of the victims were women. A new nationwide survey, conducted jointly by the family ministry, the interior ministry, and the federal criminal police office, suggests the official numbers are only a sliver of reality: fewer than 10% of violent experiences are even reported. The same study found that 20% of women and 13.9% of men reported experiencing digital violence – including cyberstalking, identity theft, coercive online contact, and digital monitoring – in the past five years. The Berlin State Commission against Violence now counts digital violence as a distinct category.

A serious response to the Collien Fernandes case would include building systems that victims can actually use: funded counseling centers, police and prosecutors trained in digital evidence, fast-response units that can preserve and trace online material before it spreads, and ways for victims to control the removal of abusive material paired with safeguards against broad censorship of sexual content. It would also mean moving closer to the source of the harm: stricter rules on how images and likenesses are scraped, stored, and reused, as well as consent and compensation standards for training data.

“We need to address the systemic violence that keeps popping up, and it’s going to pop up on every new technology that we create,” Ornelas told HEIST. “This is not going to be the last time, unless we look at unpacking why this misogyny is so rampant.”

Germany may pass new laws. It probably will. The real question is whether it will mistake that for having solved the problem that forces Collien Fernandes, when she gets up to speak about improving digital safety for women, to put on a bulletproof vest.

If you’ve experienced digital violence, support is available in multiple languages through Berlin’s BIG Hotline (030 611 03 00).
